So… what is media psychology anyway?

Does playing violent video games increase aggression?[1] What makes advertisements persuasive?[2] Are 5% / 25% / 75% of the population addicted to social media?[3] Who are these people that watch porn?[4] Why do we enjoy cat videos so much?[5]

These are the typical research questions media psychologists are concerned with. Broadly, media psychology describes and explains human behavior, cognition, and affect with regard to the use and effects of media and technology. Thus, it’s a hybrid discipline that borrows heavily from social, cognitive, and educational psychology in both its theoretical approaches and empirical traditions. The difference between a social psychologist and a media psychologist who both study video game effects is that the former publishes their findings in JPSP while the latter designs “What Twilight character are you?” self-tests[6] for perezhilton.com to avoid starving. And so it goes.

New is always better

A number of media psychologists are interested in improving psychology’s research practices and quality of evidence. Under the editorial oversight of Nicole Krämer, the Journal of Media Psychology (JMP), the discipline’s flagship[7] journal, has not only signed the Transparency and Openness Promotion Guidelines but has also become one of roughly fifty journals that offer the Registered Reports format.

To promote preregistration in general and the new submission format at JMP in particular, the journal launched a fully preregistered Special Issue on “Technology and Human Behavior” dedicated exclusively to empirical work that employs these practices. Andy Przybylski[8] and I were fortunate enough to be the guest editors of this issue.

The papers in this issue are nothing short of amazing – do take a look at them even if they are outside your usual area of interest. All materials, data, analysis scripts, reviews, and editorial letters are available here. I hope that these contributions will serve as an inspiration and model for other (media) researchers, and encourage scientists studying media to preregister designs and share their data and materials openly.

Media Psychology BC[9]

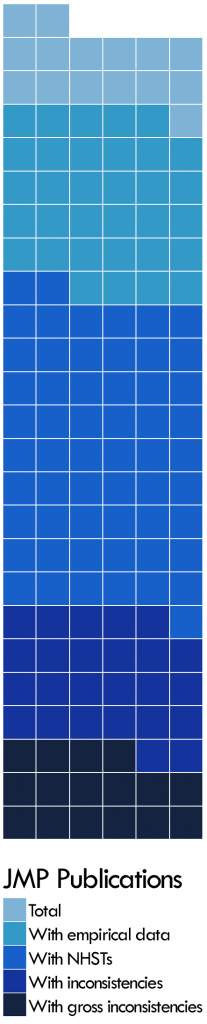

If you already suspected that in all this interdisciplinary higgledy-piggledy, media psychology inherited not only its parent disciplines’ merits but also some of their flaws, you’re probably[10] correct. Fortunately, Nicole was kind enough to allot our special issue editorial more space than usual in order to report a meta-scientific analysis of the journal’s past and to illustrate how some of the new practices can improve the evidential value of research. For this reason, we surveyed a) availability of data, b) errors in the reporting of statistical analyses, and c) sample sizes and statistical power of all 147 studies in N = 146 original research articles published in JMP between volume 20/1, when it became an English-language publication, and volume 28/2 (the most recent issue at the time this analysis was planned). This blog post is a summary of the analyses in our editorial, which (including its underlying raw data, analysis code, and code book) is publicly available at https://osf.io/5cvkr/.

Availability of Data and Materials

Historically, the availability of research data in psychology has been poor. Our sample of JMP publications suggests that media psychology is no exception to this, as we were not able to identify a single publication reporting a link to research data in a public repository or the journal’s supplementary materials.

Statistical Reporting Errors

A recent study by Nuijten et al. (2015) indicates a high rate of reporting errors in reported Null Hypothesis Significance Tests (NHSTs) in psychological research reports. To make sure such inconsistencies were avoided for our special issue, we validated all accepted research reports with statcheck 1.2.2, a package for the statistical programming language R that works like a spellchecker for NHSTs by automatically extracting reported statistics from documents and recomputing p-values.[11]

For our own analyses, we scanned all n_emp = 131 JMP publications reporting data from at least one empirical study (147 studies in total) with statcheck to obtain an estimate for the reporting error rate in JMP. Statcheck extracted a total of 1036 NHSTs reported in n_nhst = 98 articles. Forty-one publications (41.8% of n_nhst) reported at least one inconsistent NHST (max = 21), i.e. reported test statistics and degrees of freedom did not match reported p-values. Sixteen publications (16.3% of n_nhst) reported at least one grossly inconsistent NHST (max = 4), i.e. the reported p-value is < .05 while the recomputed p-value is > .05, or vice-versa. Thus, a substantial proportion of publications in JMP seem to contain inaccurately reported statistical analyses, of which some might affect the conclusions drawn from them (see Figure 1).
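To make the two definitions concrete, here is a minimal Python sketch of the kind of check described above, for two-tailed t-tests only. It is not statcheck’s actual implementation: the function names, the rounding rule, and the example values are assumptions made for illustration, and the p-value is obtained by numerically integrating the t density so that nothing beyond the standard library is needed.

```python
import math

def t_pdf(x, df):
    """Density of Student's t distribution with df degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

def two_tailed_p(t, df, steps=2000):
    """Two-tailed p-value, integrating the density from 0 to |t| (Simpson's rule)."""
    upper = abs(t)
    if upper == 0:
        return 1.0
    h = upper / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central_half = total * h / 3  # P(0 < T < |t|)
    return max(0.0, 1.0 - 2.0 * central_half)

def check(t, df, reported_p, decimals=2, alpha=0.05):
    """Return (recomputed p, inconsistent?, grossly inconsistent?).

    Assumption: a result counts as inconsistent when the recomputed p-value,
    rounded to the reported precision, differs from the reported one.
    """
    recomputed = two_tailed_p(t, df)
    inconsistent = round(recomputed, decimals) != round(reported_p, decimals)
    gross = inconsistent and ((reported_p < alpha) != (recomputed < alpha))
    return recomputed, inconsistent, gross

# Hypothetical reported results, not taken from any JMP article:
print(check(2.15, 28, 0.04))  # consistent
print(check(2.15, 28, 0.01))  # inconsistent, but the conclusion is unchanged
print(check(1.70, 28, 0.04))  # grossly inconsistent: reported < .05, recomputed > .05
```

Like the recomputation described in the post, the sketch takes the test statistic and degrees of freedom at face value and treats the reported p-value as the suspect.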

Caution is advised when speculating about the causes of the inconsistencies. Many of them are probably clerical errors that do not alter the inferences or conclusions in any way.[13] However, with some concern, we observe that clerical error is unlikely to be the only cause, as in 19 out of 23 cases the reported p-values were equal to or smaller than .05 while the recomputed p-values were larger than .05, whereas the opposite pattern was observed in only four cases. Indeed, if incorrectly reported p-values resulted merely from clerical errors, we would expect inconsistencies in both directions to occur at approximately equal frequencies.
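The “equal frequencies” argument can be put into numbers with a simple binomial tail probability. The sketch below is an illustration, not an analysis from the editorial: it assumes the 23 cases are independent and that a purely clerical error is equally likely to push a p-value in either direction.

```python
from math import comb

def binom_tail(k, n, p=0.5):
    """P(X >= k) for X ~ Binomial(n, p)."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

n_cases = 23       # cases where reported and recomputed p fall on opposite sides of .05
n_toward_sig = 19  # reported p <= .05 although the recomputed p > .05

one_sided = binom_tail(n_toward_sig, n_cases)
two_sided = min(1.0, 2 * one_sided)
print(f"P(at least {n_toward_sig} of {n_cases} in one direction) = {one_sided:.4f}")
print(f"two-sided = {two_sided:.4f}")
```

Under those assumptions, a 19-to-4 split is a rare outcome for a fair coin, which is the intuition behind the concern voiced above. Several errors from the same article are probably not independent, so the exact figure should be read loosely.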

All of these inconsistencies can easily be detected using the freely available R package statcheck or, for those who do not use R, in your browser via www.statcheck.io.

Sample Sizes and Statistical Power

High statistical power is paramount in order to reliably detect true effects in a sample and, thus, to correctly reject the null hypothesis when it is false. Further, low power reduces the confidence that a statistically significant result actually reflects a true effect. A generally low-powered field is more likely to yield unreliable estimates of effect sizes and low reproducibility of results. We are not aware of any previous attempts to estimate average power in media psychology.

Strategy 1: Reported power analyses. One obvious strategy for estimating average statistical power is to examine the reported power analyses in empirical research articles. Searching all papers for the word “power” yielded 20 hits and just one of these was an article that reported an a priori determined sample size.[14]

Strategy 2: Analyze power given sample sizes. Another strategy is to examine the power for different effect sizes given the average sample size (S) found in the literature. The median sample size in JMP is 139 with a considerable range across all experiments and surveys (see Table 1). As in other fields, surveys tend to have healthy sample sizes apt to reliably detect medium to large relationships between variables.

For experiments (including quasi-experiments), the outlook is a bit different. With a median sample size per condition/cell of 30.67, the average power of experiments published in JMP to detect small differences between conditions (d = .20) is 12%, 49% for medium effects (d = .50), and 87% for large effects (d = .80). Even when assuming that the average effect examined in the media psychological literature could be as large as the average effect in social psychology (d = .43), our results indicate that the chance that an experiment published in JMP will detect it is 38%, worse than flipping a coin.[15]

| Design | n | MD_S | MD_s/cell | 1−β (r = .1 / d = .2) | 1−β (r = .3 / d = .5) | 1−β (r = .5 / d = .8) |
|---|---|---|---|---|---|---|
| Cross-sectional surveys | 35 | 237 | n/a | 44% | > 99% | > 99% |
| Longitudinal surveys | 8 | 378.50 | n/a | 49% | > 99% | > 99% |
| Experiments | 93 | 107 | 30.67 | 12% | 49% | 87% |
| Between-subjects | 71 | 119 | 30 | 12% | 48% | 86% |
| Mixed design | 12 | 54 | 26 | 11% | 42% | 81% |
| Within-subjects | 10 | 40 | 40 | 23% | 87% | > 99% |

Table 1. Sample sizes and power of studies published in JMP volumes 20/1 to 28/2.

n = Number of published studies; MD_S = Median sample size; MD_s/cell = Median sample size per condition; 1−β = Power to detect small (r = .1 / d = .2), medium (r = .3 / d = .5), and large (r = .5 / d = .8) bivariate relationships/differences between conditions.

For between-subjects designs, mixed designs, and the experiment total, we assumed independent t-tests. For within-subjects designs we assumed dependent t-tests. All tests two-tailed, α = .05. Power analyses were conducted with the R package pwr 1.20.
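For readers who want to get a feel for where numbers like these come from, the power of a two-tailed independent t-test can be roughly approximated with the normal distribution. This Python sketch is an approximation under stated assumptions (equal group sizes, a normal stand-in for the t distribution) and is not the pwr computation behind Table 1, so its results can differ from the tabled values by about a percentage point. The function name is made up for illustration.

```python
from statistics import NormalDist

_STD_NORMAL = NormalDist()

def approx_power_two_sample(d, n_per_group, alpha=0.05):
    """Approximate power of a two-tailed independent t-test (normal approximation)."""
    z_crit = _STD_NORMAL.inv_cdf(1 - alpha / 2)
    # Expected value of the test statistic under the alternative hypothesis
    shift = d * (n_per_group / 2) ** 0.5
    return _STD_NORMAL.cdf(shift - z_crit) + _STD_NORMAL.cdf(-shift - z_crit)

# Median cell size of experiments in Table 1
n_cell = 30.67
for d in (0.20, 0.43, 0.50, 0.80):
    print(f"d = {d:.2f}: approximate power = {approx_power_two_sample(d, n_cell):.2f}")
```

The same function can be turned around to ask how many participants per cell would be needed: increase `n_per_group` until the returned value passes the desired power, e.g. .80.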

Feeling the Future of Media Psychology

The above observations could lead readers to believe that we are concerned about the quality of publications in JMP in particular. If anything, the opposite is true, as this journal recently committed itself to a number of changes in its publishing practices to promote open, reproducible, high-quality research. These analyses are simply another step in a phase of sincere self-reflection. Thus, we would like these findings, troubling as they are, to be taken not as a verdict, but as an opportunity for researchers, journals, and organizations to reflect similarly on their own practices and hence improve the field as a whole.

One key area which could be improved in response to these challenges is how researchers create, test, and refine psychological theories used to study media. Like other psychology subfields, media psychology is characterized by frequent emergence of new theories which purport to explain phenomena of interest.[16] This generativity may, in part, be a consequence of the fuzzy boundaries between exploratory and confirmatory modes of social sciences research.

Both modes of research – confirming hypotheses and exploring uncharted territory – benefit from preregistration. Drawing this distinction helps the reader determine which hypotheses carefully test ideas derived from theory and previous empirical studies, and it liberates exploratory research from the pressure to present an artificial hypothesis-testing narrative.

As technology experts, media psychology researchers are well positioned to use and study new tools that shape our science. A range of new web-based platforms have been built by scientists and engineers at the Center for Open Science, including their flagship, the OSF, and preprint services like PsyArXiv. Designed to work with scientists’ existing research flows, these tools can help prevent data loss due to hardware malfunctions, misplacement,[17] or relocations of researchers, while enabling scientists to claim more credit by allowing others to use and cite their materials, protocols, and data. A public repository for media psychology research materials is already in place.

Like psychological science as a whole, media psychology faces a pressing credibility gap. Unlike some other areas of psychological inquiry,[18] however, media research, whether concerning the Internet, video games, or film, speaks directly to everyday life in the modern world. It affects how the public forms their perceptions of media effects, and how professional groups and governmental bodies make policies and recommendations. In part because it is key to professional policy, empirical findings disseminated to caregivers, practitioners, and educators should be built on an empirical foundation with sufficient rigor.

We are, on balance, optimistic that media psychologists can meet these challenges and lead the way for psychologists in other areas. This special issue and the registered reports submission track represent an important step in this direction, and we thank the JMP editorial board, our expert reviewers, and of course, the dedicated researchers who devoted their limited resources to this effort.

The promise of building an empirically-based understanding of how we use, shape, and are shaped by technology is an alluring one. We firmly believe that incremental steps taken towards scientific transparency and empirical rigor will help us realize this potential.

If you read this entire post, there’s a 97% chance you’re on Team Edward.

Footnotes

| ↑1 | No, but violent video game research kinda does. |
|---|---|
| ↑2 | David Hasselhoff, obvs. |
| ↑3 | It’s almost like humans have a fundamental need for social interactions. |
| ↑4 | Literally everyone everywhere |
| ↑5 | WHY??? |
| ↑6 | TEAM EDWARD FTW! |
| ↑7 | By “flagship” I mean one of two journals nominally dedicated to this research topic, the other being Media Psychology. It’s basically one of those People’s Front of Judea vs. Judean People’s Front situations. |
| ↑8 | Who, for reasons I can’t fathom, prefers being referred to as a “motivational researcher” |
| ↑9 | Before Chambers |
| ↑10 | unerring-absolute-100%-pinpoint-unequivocally-no-ifs-and-buts-dead-on-the-money |
| ↑11 | p-values are recomputed from the reported test statistics and degrees of freedom. Thus, for the purpose of recomputation, it is assumed that test statistics and degrees of freedom are correctly reported, and that any inconsistency is caused by errors in the reporting of p-values. The actual inconsistencies, however, can just as well be caused by errors in the reporting of test statistics and/or degrees of freedom. |
| ↑12 | Hmm… waffles. |
| ↑13 | For example, in 20 cases the authors reported p = .000, which is mathematically impossible (for each of these, p_recomputed < .001). Other inconsistencies might be explained by authors not declaring that their tests were one-tailed (which is relevant for their interpretation). |
| ↑14 | In the 19 remaining articles power is mentioned, for example, to either demonstrate observed or post-hoc power (which is redundant with reported NHSTs), to suggest larger samples should be used in future research, or to explain why an observed nonsignificant “trend” would in fact be significant had the statistical power been higher. |
| ↑15 | An operation that would be considerably less expensive. |
| ↑16 | As James Anderson recently put it in a very clever paper (as usual): “Someone entering the field in 2014 would have to learn 295 new theories the following year.” |
| ↑17 | Including mindless grad students and hungry dogs |
| ↑18 | such as meta science |