Today we are hosting a guest blog by Pieter and Tom, who tell us about their round-shaped and spiky experiences working on a preregistered direct replication.

A typical scientific paper focuses on the cold, hard facts and tends to ignore the "human side" of the research process: the mistakes, the disappointments, the struggles. In this blog post, we offer you a peek behind the curtain of our recent contribution (published article, preprint), talking about the ups and downs of sequential testing, and our perspective on replication.

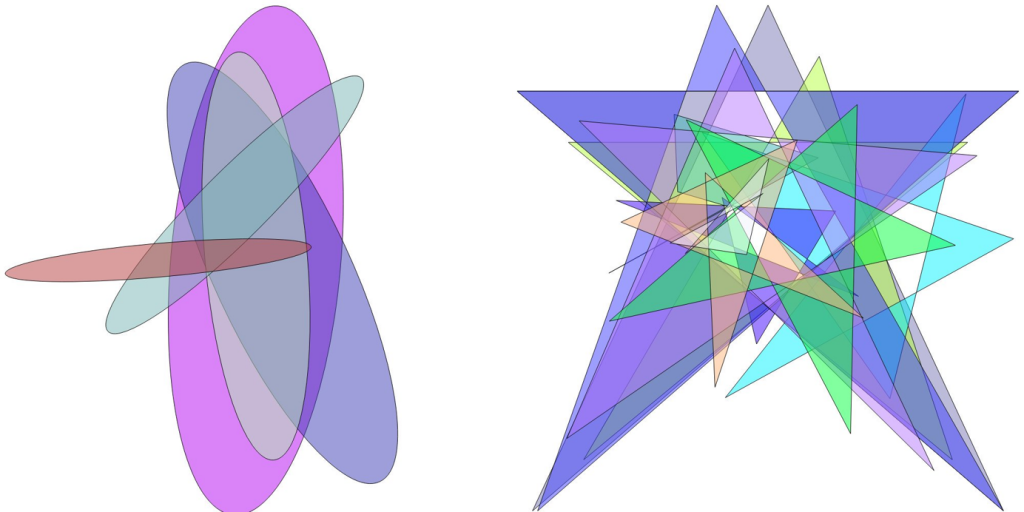

About two years ago, we saw an interesting study by Hung, Styles, and Hsieh (2017), published in Psychological Science, on the bouba–kiki effect, which is the observation that people readily associate the nonword bouba with a round shape, and kiki with a sharp one.

Hung and colleagues found that the effect even manifests itself when the stimuli are invisible! In particular, when sound-shape stimuli were suppressed from awareness by concurrently presenting rapidly flashing, colorful rectangles to the participants' dominant eye, congruent combinations (e.g., kiki surrounded by a sharp shape) were found to break suppression faster than incongruent combinations (e.g., bouba surrounded by a sharp shape; Experiment 1, see Figure 2). This result led the authors to conclude that sound-shape mapping occurred while the stimuli were invisible, and that this type of integration therefore does not require visual awareness of the stimuli.

It may sound like a bold claim, but many studies in the unconscious visual processing literature have actually been pushing the boundaries of what people are supposedly capable of in the absence of awareness. Until recently, the status quo was that complex visual or cognitive processes could happen without awareness. Slowly, replication studies began to provide some counterweight, calling into question the credibility of these findings. In fact, of all the studies using the continuous flash suppression masking technique, Hung and colleagues’ is one of the few whose claims regarding complex visual processing of invisible stimuli remain unchallenged.

Sounds like a worthy replication target, doesn’t it?

Enticed by the launch of a new article type in Psychological Science called preregistered direct replications, we put our money where our mouth was. Besides replicating Hung et al.'s first experiment, we also included a "manipulation check" of our set-up in the form of a face inversion effect. This is the repeatedly replicated phenomenon that upright faces become visible faster than faces presented upside down. In case we failed to replicate the bouba-kiki effect, we wanted to be able to show that the robust face inversion effect could be obtained. We also decided to perform a Bayesian hypothesis test after having collected data from 90 participants and, if necessary, to add participants in batches of 10 until we had obtained sufficient evidence (i.e., sequential testing). That is, a Bayes factor ≥ 6 would count as evidence for replicating the effect, whereas a Bayes factor ≤ 1/6 would be more consistent with the absence of an effect. Alternatively, we would stop data collection at the pre-specified maximum of 180 participants. Okay, now that you're all up to speed, let's lift the curtain, shall we?
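For readers unfamiliar with sequential designs, the stopping rule above can be sketched in a few lines of Python. This is an illustrative sketch, not the analysis code from the study: `bf_at` is a hypothetical stand-in for whatever computes the Bayes factor (BF10) on the first n participants, and the sketch assumes that n counts only participants retained after the a priori exclusion criteria.

```python
from typing import Callable, Tuple

# Design parameters as described in the post
N_MIN = 90        # first checkpoint
BATCH = 10        # participants added between checkpoints
N_MAX = 180       # pre-specified maximum sample size
BF_UPPER = 6.0    # BF10 >= 6: evidence for the effect
BF_LOWER = 1 / 6  # BF10 <= 1/6: evidence for the absence of the effect


def sequential_decision(bf_at: Callable[[int], float]) -> Tuple[int, float, str]:
    """Check n = 90, 100, ..., 180 and stop at the first checkpoint where
    the Bayes factor crosses either threshold.

    bf_at is a hypothetical callable returning BF10 for the first n
    included participants (an assumption, not part of the original design text).
    """
    n = N_MIN
    while True:
        bf = bf_at(n)
        # Threshold checks come first, so a crossing at N_MAX still counts
        if bf >= BF_UPPER:
            return n, bf, "evidence for the effect"
        if bf <= BF_LOWER:
            return n, bf, "evidence for no effect"
        # No threshold crossed: stop only if the maximum has been reached
        if n >= N_MAX:
            return n, bf, "maximum reached: inconclusive"
        n += BATCH
```

With the synthetic trajectory `lambda n: n / 30`, invented purely to exercise the rule (not data from either study), the Bayes factor first reaches 6 at n = 180, so the sketch returns `(180, 6.0, "evidence for the effect")`. Checking the thresholds before the maximum means a crossing at exactly 180 participants is still read as evidence rather than as an inconclusive stop.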

In the paper, and in our introduction above, we don't mention the interesting journey to get to that point. For instance, why those particular evidential thresholds? Because we were asked to lower them (yes, really!). The paper also does not say that we increased our minimum sample size from 60 to 90 at the last minute, due to a correction (of a correction) to the original article. Nor does it talk about the data collection rollercoaster ride. All you see as a reader is a dry description of the final sample size and the corresponding results. This might give one the impression that it was smooth sailing all the way. It wasn't; far from it.

The sequential testing procedure in particular played out rather unfortunately, to say the least. On a couple of occasions, we came agonizingly close to hitting our evidential threshold. Right before the end of the fall semester in 2018, we thought we could wrap up after reaching the Bayes factor threshold with 100 participants, only to find out that one participant had to be removed based on an a priori exclusion criterion. But surely the one additional participant needed for the next checkpoint would not make that big of a difference, right? The data god(s) decided otherwise; happy holidays y'all! Our faith and patience were tested once more a couple of months later, when roughly the same thing happened at N = 150. As a final cosmic taunt, the Bayes factor crossed the threshold again precisely at N = 180, the sample size at which data collection would have terminated anyway.

So, which boundary did we hit? Scroll down and have a look at Figure 3 to find out!

To us, it still feels odd that there is such a thin line between concluding "it replicates!" and "the data appear to be uninformative". Ironically, with the initial minimum sample size of 60, data collection would have stopped immediately, resulting in the exact same conclusion we ended up drawing 6 months and 120 participants later. Well, not exactly the same conclusion. In a further twist of fate, the results of the face inversion effect control experiment were very much… unanticipated. Scroll down and have a look at Figure 4 to find out.

We needed 110 participants before we could say "it replicated!" (based on the Bayes factor criterion). This was a huge surprise to us. We think this is an interesting example of a well-known, seemingly robust effect that turns out to be not so easy to obtain. How should we interpret this? Is the literature biased? Is there a file drawer of studies that did not easily replicate this face inversion effect? In an earlier study, we obtained a face inversion effect (with different stimuli) testing only 8 (!) participants, resulting in a BF > 100 (Heyman & Moors, 2014). Thus, our current hypothesis is that the face inversion effect is much more heterogeneous than previously thought, and that we happened to pick a set of stimuli that was poorly suited to reveal it.

On the surface, this replication effort has yielded a positive outcome. We directly tested a finding published in Psychological Science, and it replicated. We also tested a classic effect, and it replicated. Yay for replication, right?

Still, if you asked us now, "Do you believe this bouba-kiki effect?", we would respond "no". Well, it depends on what the question implies. Do you believe that this particular experimental effect will replicate over and over? Yes, maybe, it depends. Do you believe that this particular experimental effect can be interpreted as evidence for sound-shape mapping in the absence of awareness? No.

So, what was the point of doing this study then?

Well, we believe it made sense to first examine the replicability of the reported effect per se, before embarking on examining the boundary conditions or producing a theoretically more informative replication study. Rather than changing a host of factors, we opted to mimic the original study as closely as possible; otherwise, we would be left scrambling to explain a potential "failed" replication. Examining the boundary conditions of this now-replicated effect is precisely what should be done next to learn more about the nature of the phenomenon in question. For example, neither Hung et al.'s study nor ours employed several control conditions that are common in the literature (i.e., so-called binocular and monocular control conditions where perceptual suppression is "simulated"). A bouba-kiki effect in these conditions could indicate that the effect is mostly due to decisional factors, rather than some form of unconscious processing. Relatedly, our replication only included stimuli in which the words are legible. Thus, we cannot rule out the possibility that the exact shape of the letters, rather than the sound-shape mapping, contributed to the congruency effect.

Despite the replication being a success, our conclusion may sound a little grim. That said, we did learn valuable lessons along the way. For one, replication studies require a lot of work. We cannot stress enough that this is a relatively simple experimental study, yet approximately 10 months passed between receiving Stage 1 acceptance and thanking the last participant for her efforts. It was by far the longest and most laborious data collection process we had been part of, though we realize that we are in no position to complain when compared to other domains or projects. When time and money are of no concern, the unfortunate trajectory might merely be a funny anecdote. However, many researchers, especially those on temporary contracts, don't have that luxury. After all, a publication, especially one in a highly regarded journal like Psychological Science, can make a big difference in the academic job hunt.

Would we do it differently, were we to conduct a follow-up study? Would we still opt for a Registered Report? Maybe. In the end, publication is guaranteed regardless of study outcome, but you also relinquish control over the start of data collection. For example, in our case, the timing of the Stage 1 acceptance letter did not coincide with the availability of potential participants. Would we still opt for a sequential testing procedure? Maybe. It is generally more efficient in terms of resources, and we don't expect lightning to strike twice. However, was it really lightning, or is the trajectory we observed "normal"? Also, the very nature of sequential testing, whether in a Bayesian or a frequentist framework, makes it much more explicit that simply removing one data point can dramatically alter the conclusion of a study.

Make no mistake, the issues we raise here are by no means insurmountable, and they pale in comparison to the obstacles other researchers might face. Nevertheless, we hope that sharing our experiences might be helpful to those considering a Registered Report, with or without sequential testing.

Preview picture of this blog post: Tip of the Iceberg — Image by © Ralph A. Clevenger/CORBIS, CC BY-NC 2.0